MiniMax M2.1 LLM Models

MiniMax M2.1/M2 is a family of long-context foundation models built on a hybrid architecture that combines lightning attention, standard attention, and Mixture-of-Experts layers. It is designed for efficient inference, strong reasoning, and extremely long inputs, scaling up to millions of tokens.

Explore the Leading MiniMax M2.1 LLM Models

MiniMax-M2.1 is a lightweight, state-of-the-art large language model optimized for coding, agentic workflows, and modern application development. With only 10 billion activated parameters, it delivers a major jump in real-world capability while maintaining exceptional latency, scalability, and cost efficiency.

MiniMax M2.1

MiniMax-M2 is a lightweight, state-of-the-art large language model optimized for coding, agentic workflows, and modern application development. With only 10 billion activated parameters, it delivers a major jump in real-world capability while maintaining exceptional latency, scalability, and cost efficiency.

MiniMax-M2

What Stands Out - MiniMax M2.1 LLM Models

Frontier-Scale Reasoning

State-of-the-art language models built for deep reasoning, complex problem-solving, and multi-step planning.

Ultra Long-Context Understanding

Lightning-style attention and an optimized architecture enable MiniMax models to process and retain long contexts.

Cost-Efficient MoE Performance

Mixture-of-Experts designs deliver high intelligence, low latency, and significantly better price-performance.

Versatile Model Family

From powerful general-purpose models to coding- and agent-optimized variants.

Enterprise-Ready Reliability

Stable, scalable infrastructure with monitoring and safety for production use.

Open & Developer-Friendly

Rich APIs, SDKs, and open-weight releases give builders flexibility to integrate, fine-tune, or self-host.

What You Can Do - MiniMax M2.1 LLM Models

Build advanced assistants with strong reasoning and long-context understanding.

Accelerate coding workflows using MiniMax-M2 for fast generation and debugging.

Run long-document and multi-file tasks with MiniMax’s ultra-long context capabilities.

Optimize cost and speed through MiniMax’s efficient MoE architecture.

Apply MiniMax models to finance, compliance, analytics, and enterprise automation.

Deploy open-weight MiniMax models for customizable and self-hosted AI solutions.

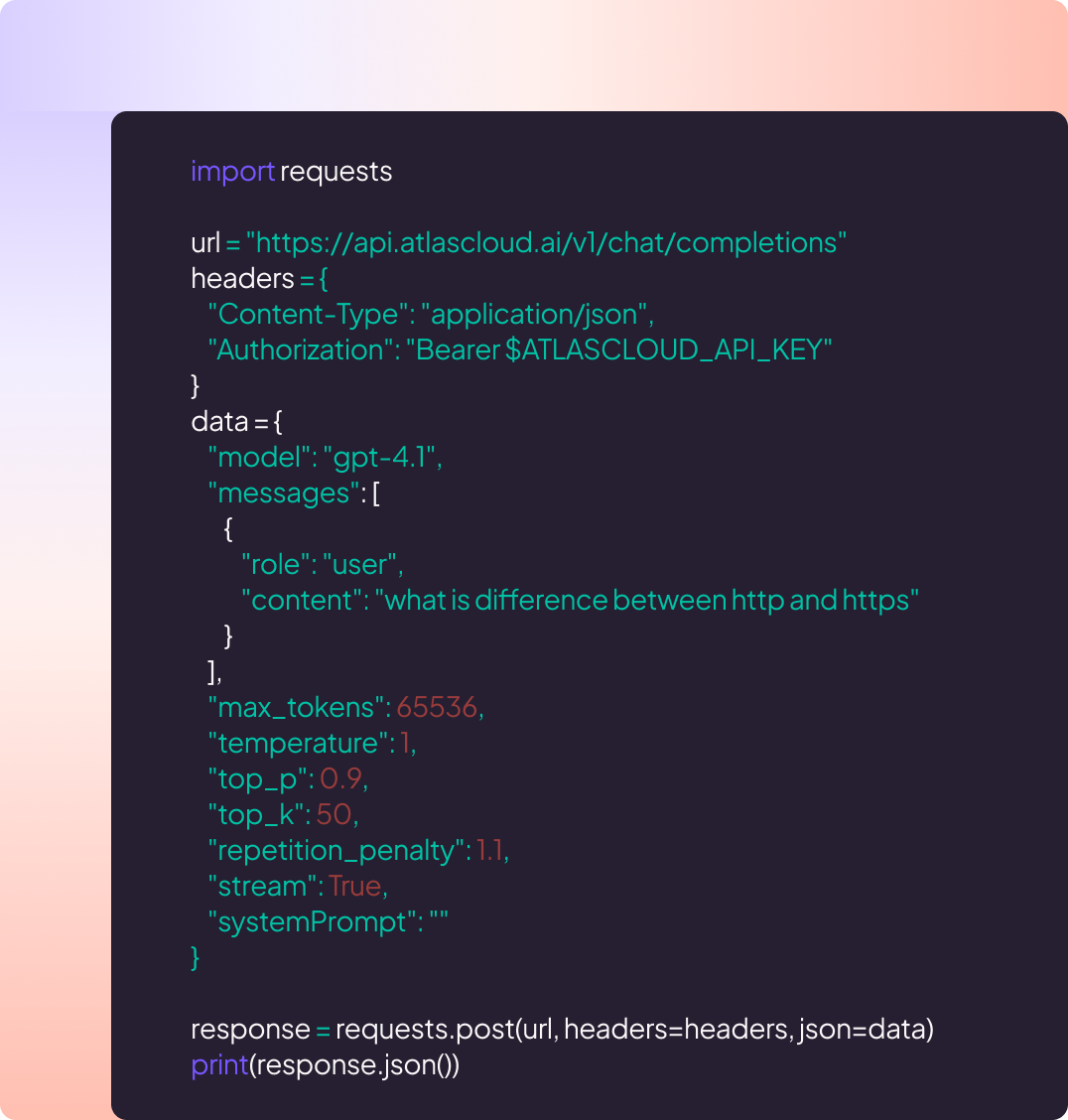

Why Use MiniMax M2.1 LLM Models on Atlas Cloud

Combining the advanced MiniMax M2.1 LLM models with Atlas Cloud's GPU-accelerated platform provides unmatched performance, scalability, and developer experience.

MiniMax M2.1 and M2 combine frontier-scale reasoning with ultra-long context memory.

Performance & flexibility

Low Latency:

GPU-optimized inference for real-time reasoning.

Unified API:

Run MiniMax M2.1 LLM Models, GPT, Gemini, and DeepSeek with one integration.

Transparent Pricing:

Predictable per-token billing with serverless options.
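
As a rough illustration of the "one integration" idea under Unified API, the sketch below builds the same request shape for different models and changes only the model name. It assumes an OpenAI-style chat completions schema, which this page does not confirm. The base URL, the `build_chat_request` helper, and the model identifiers are all hypothetical placeholders, and nothing is sent over the network.

```python
import json

# Hypothetical placeholder: the real endpoint is not stated on this page.
BASE_URL = "https://api.example.com/v1"


def build_chat_request(model: str, prompt: str, max_tokens: int = 256) -> dict:
    """Describe a chat request; only the `model` field varies between models.

    Assumes an OpenAI-style chat completions body (an assumption, not a
    documented Atlas Cloud schema).
    """
    return {
        "url": f"{BASE_URL}/chat/completions",
        "headers": {"Content-Type": "application/json"},
        "body": {
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
            "max_tokens": max_tokens,
        },
    }


if __name__ == "__main__":
    # Model identifiers below are illustrative, not confirmed names.
    for model_id in ("minimax-m2.1", "another-model"):
        request = build_chat_request(model_id, "Summarize this changelog.")
        print(json.dumps(request["body"], indent=2))
```

Keeping the model name as the only varying field is what would let an application switch between model families without changing its request-building code.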

Enterprise & Scale

Developer Experience:

SDKs, analytics, fine-tuning tools, and templates.

Reliability:

99.99% uptime, RBAC, and compliance-ready logging.

Security & Compliance:

SOC 2 Type II, HIPAA alignment, data sovereignty in the US.

Explore More Families

GLM LLM Models

The Z.ai GLM LLM family pairs strong language understanding and reasoning with efficient inference to keep costs low, offering flexible deployment and tooling that make it easy to customize and scale advanced AI across real-world products.

Seedance 1.5 Video Models

Seedance is ByteDance’s family of video generation models, built for speed, realism, and scale. Its AI analyzes motion, setting, and timing to generate matching ambient sounds, then adds creative depth through spatial audio and atmosphere, making each video feel natural, immersive, and story-driven.

Moonshot LLM Models

The Moonshot LLM family delivers cutting-edge performance on real-world tasks, combining strong reasoning with ultra-long context to power complex assistants, coding, and analytical workflows, making advanced AI easier to deploy in production products and services.

Wan2.6 Video Models

Wan 2.6 is Alibaba’s state-of-the-art multimodal video generation model, capable of producing high-fidelity, audio-synchronized videos from text or images. It lets you create videos of up to 15 seconds while preserving narrative flow and visual integrity, which makes it well suited to YouTube Shorts, Instagram Reels, Facebook clips, and TikTok videos.

Flux.2 Image Models

The Flux.2 Series is a comprehensive family of AI image generation models. Across the lineup, Flux supports text-to-image, image-to-image, reconstruction, contextual reasoning, and high-speed creative workflows.

Nano Banana Image Models

Nano Banana is a fast, lightweight image generation model for playful, vibrant visuals. Optimized for speed and accessibility, it creates high-quality images with smooth shapes, bold colors, and clear compositions—perfect for mascots, stickers, icons, social posts, and fun branding.

Image and Video Tools

Open, advanced large-scale image generative models that power high-fidelity creation and editing with modular APIs, reproducible training, built-in safety guardrails, and elastic, production-grade inference at scale.

LTX-2 Video Models

LTX-2 is a complete AI creative engine. Built for real production workflows, it delivers synchronized audio and video generation, 4K video at 48 fps, multiple performance modes, and radical efficiency, all with the openness and accessibility of running on consumer-grade GPUs.

Qwen Image Models

Qwen-Image is Alibaba’s open image generation model family. Built on advanced diffusion and Mixture-of-Experts design, it delivers cinematic quality, controllable styles, and efficient scaling, empowering developers and enterprises to create high-fidelity media with ease.

OpenAI Model Families

Explore OpenAI’s language and video models on Atlas Cloud: ChatGPT for advanced reasoning and interaction, and Sora-2 for physics-aware video generation.

Hailuo Video Models

MiniMax Hailuo video models deliver text-to-video and image-to-video at native 1080p (Pro) and 768p (Standard), with strong instruction following and realistic, physics-aware motion.

Only on Atlas Cloud.