The AI video generation market has changed dramatically. In 2024, we only had blurry 15-second clips. By early 2026, AI video APIs have grown into a mature, production-ready ecosystem. The direction for AI video in 2026 is clear: we are finally moving past random generation and stepping into real directorial control.

The Evolution of AI Video APIs (Tiers 1-5)

The evolution of AI video APIs follows a simple progression: Production → Control → Direction.

Each new tier does not replace the older ones. Honestly, it just absorbs the previous tier and adds a whole new dimension of creative control.

Tier 1: Text-to-Video – The Proof-of-Concept Era

Function: You type a prompt, and the model spits out a video.

Importance: This sparked the entire generative video boom. It proved that machines could simulate motion.

Limitations: It was incredibly unpredictable. We had practically zero temporal stability.

API View: Very simple. Developers just sent a POST request with a basic text string to the endpoint.
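To make that concrete, here is a minimal sketch of a Tier 1 request. The endpoint URL and the `prompt` field name are placeholders invented for illustration, not any vendor's real API. The request is built but never sent.

```python
import json
import urllib.request

# Hypothetical endpoint, used only for illustration.
ENDPOINT = "https://api.example.com/v1/text-to-video"

def build_text_to_video_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that carries only a text prompt."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
    )

req = build_text_to_video_request("a red kite over a beach", "YOUR_API_KEY")
print(req.get_method(), req.full_url)
```

Sending it would be a single `urllib.request.urlopen(req)` call, which is about all the integration work Tier 1 ever asked for.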

Tier 2: Image-to-Video – Anchoring Reality

Function: You upload a starting image, and the model animates it based on your prompt.

Key Leap: This was our first real taste of anchoring reality. Starting with an image finally gave us a reliable way to maintain character consistency—at least for the first few seconds of a clip.

Limitations: The background still warped heavily. If you pushed the motion too far, the physics broke down completely.

API View: The payload expanded. APIs now required an image_url parameter alongside the text prompt, forcing developers to manage media hosting before calling the video model.

Tier 3: Video-to-Video – Transformation as a Basic Element

Function: You feed a source video into the API, and the AI reskins it entirely.

Importance: This let creators shoot a rough scene on their phones and turn it into a high-budget sci-fi shot. It locked down the structural motion.

API View: This is where infrastructure got heavy. API calls required chunked uploads for large video files. Developers had to start thinking about webhooks because processing these requests took minutes, not seconds.
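The chunking half of that problem can be sketched in a few lines. The chunk size and the 1-based part numbering are assumptions here. Real upload APIs define their own part sizes and completion calls, so treat this as the shape of the loop and nothing more.

```python
import io
from typing import BinaryIO, Iterator, Tuple

def iter_chunks(stream: BinaryIO, chunk_size: int) -> Iterator[Tuple[int, bytes]]:
    """Yield (part_number, data) pairs, reading at most chunk_size bytes at a time."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    part_number = 1
    while True:
        data = stream.read(chunk_size)
        if not data:
            break
        yield part_number, data
        part_number += 1

# Stand-in for a real file opened with open(path, "rb").
fake_video = io.BytesIO(b"x" * 10)
parts = list(iter_chunks(fake_video, chunk_size=4))
print([(number, len(data)) for number, data in parts])
```

Each yielded part would be uploaded on its own, so a dropped connection costs you one chunk instead of the whole file.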

Tier 4: Controlled Generation – Giving Developers the Lens

Function: The API allows fine-grained control over how the virtual camera behaves inside the generated scene.

Control Parameters: We finally got camera movement control (dolly, pan), plus tilt, zoom, and tracking shots.

Developer Turning Point: We stopped getting random, dizzying spinning cameras. If a client wanted a slow push-in on a product, developers could actually code that specific instruction.

API View: API payloads became structured JSON objects. Instead of just a prompt, you now pass camera_motion: { pan: "left", speed: 0.5 } and a motion_bucket_id to strictly limit how much the background moves.
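A rough sketch of building that kind of structured payload is below. The field names come from the paragraph above. The allowed pan values, the 0.0 to 1.0 speed range, and the example motion_bucket_id are assumptions for illustration, since every vendor defines its own limits.

```python
import json

# Assumed set of valid pan directions; real APIs may accept more.
ALLOWED_PANS = {"left", "right"}

def build_camera_payload(prompt: str, pan: str, speed: float, motion_bucket_id: int) -> dict:
    """Validate the camera settings and return a JSON-serializable payload."""
    if pan not in ALLOWED_PANS:
        raise ValueError(f"pan must be one of {sorted(ALLOWED_PANS)}")
    if not 0.0 <= speed <= 1.0:
        raise ValueError("speed must be between 0.0 and 1.0")
    return {
        "prompt": prompt,
        "camera_motion": {"pan": pan, "speed": speed},
        "motion_bucket_id": motion_bucket_id,
    }

payload = build_camera_payload("product on a turntable", "left", 0.5, 40)
print(json.dumps(payload, indent=2))
```

Validating on your side before the call is cheap, and it means a typo in a camera direction fails in milliseconds instead of after a paid render.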

Tier 5: Cinematic Director – The 2026 Frontier

Function: You don't just generate a shot anymore. You plan and direct a multi-shot scene with physics-aware generation and synchronized sound.

Key Difference: It feels like working with a digital film crew. You command lighting, focus pulls, and actor blocking.

Key Leap: The shift to true directable AI powered by multimodal AI architectures. The models now understand audio cues, text, and storyboard sketches simultaneously.

API View: Deeply complex. Endpoints now accept a scene_graph array. You can pass timeline markers, audio sync cues, and specific character reference IDs across multiple generation calls to ensure the actor looks identical in every single shot.
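Here is one way such a scene_graph could be assembled and sanity-checked before sending. Apart from scene_graph itself, every field name (shot_id, start_s, end_s, character_ids) is hypothetical, chosen to mirror the timeline markers and character reference IDs described above.

```python
from dataclasses import asdict, dataclass, field
from typing import Dict, List

@dataclass
class Shot:
    shot_id: str
    start_s: float           # timeline marker: shot start, in seconds
    end_s: float             # timeline marker: shot end, in seconds
    description: str
    character_ids: List[str] = field(default_factory=list)

def build_scene_graph(shots: List[Shot], known_characters: List[str]) -> Dict[str, list]:
    """Check ordering and character references, then return the payload."""
    previous_end = 0.0
    for shot in shots:
        if shot.end_s <= shot.start_s:
            raise ValueError(f"{shot.shot_id}: end must be after start")
        if shot.start_s < previous_end:
            raise ValueError(f"{shot.shot_id}: overlaps the previous shot")
        unknown = set(shot.character_ids) - set(known_characters)
        if unknown:
            raise ValueError(f"{shot.shot_id}: unknown characters {sorted(unknown)}")
        previous_end = shot.end_s
    return {"scene_graph": [asdict(shot) for shot in shots]}

shots = [
    Shot("s1", 0.0, 3.0, "wide shot of the street", ["hero"]),
    Shot("s2", 3.0, 5.5, "close-up on the hero", ["hero"]),
]
payload = build_scene_graph(shots, known_characters=["hero"])
print(len(payload["scene_graph"]), "shots")
```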

Top AI Video APIs and API Specialization Directions

| Model | Official Company | Capability Tier | Native API Architecture | Core Capability | Best For Users | Input Type | Output Quality | Scene Control | Character Consistency | Narrative Logic | Editing & Post | Pricing Model | Dev Experience | Latency/Throughput |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Sora 2 | OpenAI | Tier 5 | REST/Websockets | Photorealism | Filmmakers | Text, Image, Audio | Cinematic 4K | Granular | Perfected | High | API-native editing | High/Per-sec | Complex but robust | Medium / High |
| Gen-4.5 | Runway | Tier 4/5 | RESTful | Camera movement control (Dolly/Pan) | Creators, Devs | Text, Image, Video | 4K | Granular | Very High | Medium | Top-tier | Subscription + Usage | Excellent SDKs | Low / High |
| Veo 3.1 | Google | Tier 5 | gRPC/REST | Storyboard to Video | Agencies, Studios | Multimodal | 4K | Medium | High | Excellent | Moderate | Token/Compute | Enterprise-focused | Medium / Very High |
| Kling 3.0 | Kuaishou | Tier 4 | RESTful | Physics & Motion | Volume Creators | Text, Image | 1080p/4K | High | High | Low | Basic | Very Low/Per-gen | Clean, easy | Very Low / Massive |
| Seedance 2.0 | ByteDance | Tier 4 | RESTful | Native Audio Sync | Social Marketers | Text, Audio | 1080p vertical | Moderate | Moderate | Low | Auto-captions | Usage-based | Good | Low / Massive |
| Wan 2.7 | Alibaba | Tier 4 | RESTful | Product locking | E-commerce | Image, Text | 4K | High | Absolute (Products) | Low | Moderate | Usage-based | Needs work | Medium / High |

Detailed Model Breakdowns

- Sora 2 (OpenAI): A pivotal story for 2026. OpenAI shut down the standalone Sora app and API on March 24, but the model still powers the best AI cinematic directing tools today. The big technical leap here is the "Director's Mode" endpoint. It offers incredible temporal stability.

- Gen-4.5 (Runway): Hit the market in late 2025. Runway is still the king of granular editing. Developers absolutely love their clean documentation.

- Veo 3.1 (Google): Launched Q1 2026. Google focused deeply on multi-shot narrative logic. You can pass an entire script into the API, and it automatically builds out a cohesive scene.

- Kling 3.0 (Kuaishou): The biggest surprise of early 2026. They triggered a massive API price war. The physics simulation is rock solid, and the throughput is just insanely fast.

- Seedance 2.0 (ByteDance): Rolled out recently specifically for social marketers. The native audio-sync features totally eliminate the need for external voiceover APIs.

- Wan 2.7 (Alibaba): Arriving right now in early 2026. Alibaba built this specifically for retail. You can lock in 3D product details perfectly.

The "Cinematic Director" Frontier

Before 2025, AI video APIs basically just generated isolated, slightly unpredictable video clips. By 2026? They can actually direct how an entire scene is shot. It feels less like coding and more like running a virtual film set.

Camera as a First-Class Parameter

You don't just type "camera moves" in a text box anymore. You pass actual cinematography data. API endpoints now use precise parameter naming. They accept commands like lens_type: "35mm" or angle: "low_angle_tracking". We finally have strict camera movement control (dolly, pan) built directly into the API payload.

Character and Subject Consistency Across Shots

You just assign a character_id seed in your API calls. The model automatically references those exact embeddings across multiple requests. Flawless character consistency is finally a solved problem.
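As a small illustration, here is how the same character_id might be stamped onto every shot request. The character_id field is from the text above. The ID value and the other payload fields are made up for the example.

```python
from typing import Dict, List

def with_character(payload: Dict[str, str], character_id: str) -> Dict[str, str]:
    """Return a copy of the payload that references a shared character."""
    return {**payload, "character_id": character_id}

# Hypothetical shot payloads; only character_id comes from the article.
shot_payloads: List[Dict[str, str]] = [
    {"prompt": "she walks into the cafe"},
    {"prompt": "she sits by the window"},
    {"prompt": "she looks up and smiles"},
]

requests_to_send = [with_character(shot, "char_7f3a") for shot in shot_payloads]
print({request["character_id"] for request in requests_to_send})
```

Returning a copy instead of mutating the shot keeps the original shot list reusable, for example if you want to render the same sequence with a different actor.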

Multi-Shot Sequences and Scene Graphs

Developers are currently building full storyboard-to-video workflows. By pushing a JSON scene graph to a new "Video Compilation" endpoint, you can string five different camera angles together. The API actually understands the physical space between the shots.

Motion and Timing Control

Motion isn't just "fast" or "slow" anymore. We use custom speed curves now. You can define specific key points in the API to perfectly time an action with an audio beat. Duration control is exact down to the frame, so your audio sync never drifts.
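The arithmetic behind frame-exact timing is simple enough to sketch. The helper names are invented for this example, and the smoothstep curve is just one common ease-in-out shape, not something a particular API mandates.

```python
from typing import List

def seconds_to_frame(t_seconds: float, fps: int) -> int:
    """Convert a timestamp to the nearest frame index."""
    return round(t_seconds * fps)

def keyframes_for_beats(beat_times_s: List[float], fps: int) -> List[int]:
    """Map audio beat timestamps to the frames where an action should land."""
    return [seconds_to_frame(t, fps) for t in beat_times_s]

def ease_in_out(t: float) -> float:
    """Smoothstep speed curve: slow start, fast middle, slow end (t in [0, 1])."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be between 0 and 1")
    return t * t * (3 - 2 * t)

beats = [0.5, 1.0, 1.5, 2.0]             # beats every half second (120 BPM)
frames = keyframes_for_beats(beats, fps=24)
total_frames = seconds_to_frame(5.0, fps=24)
print(frames, total_frames)               # [12, 24, 36, 48] 120
```

Working in integer frames rather than floating-point seconds is what keeps a five-second clip at exactly 120 frames and stops rounding errors from piling up across shots.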

Style and Aesthetic Locking

API control now includes actual color grading configurations and precise film simulations (like 16mm or 35mm grain). You set your aspect ratio, lock the lighting angle, and the model holds that aesthetic perfectly.

Prompt Language Is Evolving into Directorial Language

We aren't really writing "prompts" anymore. We are writing shot lists. The concept of prompting has completely evolved into true directable AI. Instead of "a happy dog running," you send strict directorial language to the API, defining the exact lens angle and actor blocking.

Commercialization and Applications

Who is actually paying for these AI video APIs today? Everyone. But their reasons vary wildly.

Marketing & Advertising Teams

Needs & Pain Points: Agencies need hyper-localized ads fast, but physical video shoots are just too expensive.

API Features They Care About: They love native audio-sync capabilities.

Outlook for 2026: Ads will dynamically change actors based on who is watching.

E-commerce & Retail

Needs & Pain Points: Showing products in motion drives massive sales. But if a dress suddenly warps in the video, it kills buyer trust.

API Features They Care About: Absolute product locking.

Outlook for 2026: We will see real-time, dynamic try-on videos generated directly on product pages.

Game Studios & Interactive Media

Needs & Pain Points: Traditional 3D rendering for cutscenes takes weeks of studio time.

API Features They Care About: They obsess over strict temporal stability and spatial control.

Outlook for 2026: Expect live, real-time video textures rendering directly inside game engines.

Independent Filmmakers & Content Creators

Needs & Pain Points: They want blockbuster aesthetics but lack the Hollywood crew.

API Features They Care About: Advanced AI cinematic directing tools and granular camera movement control.

Outlook for 2026: The first purely API-generated indie feature film will win a major festival this year.

News Media & Publishers

Needs & Pain Points: Breaking news needs fast visual context. Stock footage is getting really boring.

API Features They Care About: Ultra-low latency and strict factual prompt adherence.

Outlook for 2026: Fully automated, daily video news digests generated entirely from text articles.

EdTech & Training Platforms

Needs & Pain Points: Students ignore static slideshows. But making highly engaging video modules is hard.

API Features They Care About: Flawless character consistency to build reliable, recognizable AI tutors.

Outlook for 2026: Adaptive video lessons that automatically rewrite and re-render themselves if a student gets confused.

SaaS Developers & Platform Builders

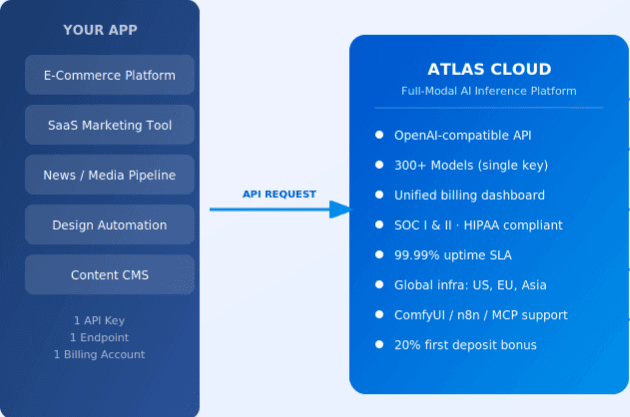

Needs & Pain Points: Embedding video creation tools is tough. Managing five different vendor API keys is a complete nightmare.

API Features They Care About: High throughput, reliable webhooks, and unified management endpoints.

Outlook for 2026: Relying on an AI video aggregator API platform will become the absolute industry standard.

Integration Patterns for Developers

Building apps with AI video APIs isn't like querying a normal text database. Video rendering takes actual time. Let me show you how smart developers are actually wiring this stuff up in 2026.

Asynchronous-First Architecture

If you keep an HTTP connection open for three minutes while rendering a 4K video, the server will time out. You absolutely must build an asynchronous architecture from day one.
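Here is a minimal in-memory sketch of the submit-now, finish-later pattern. The JobStore class and its status values are hypothetical. In production the store would be a database or queue, and complete() would be called by a worker or a vendor callback.

```python
import uuid
from enum import Enum
from typing import Dict, Optional

class JobStatus(str, Enum):
    QUEUED = "queued"
    DONE = "done"

class JobStore:
    """Tracks render jobs so the HTTP request can return immediately."""

    def __init__(self) -> None:
        self._jobs: Dict[str, dict] = {}

    def submit(self, payload: dict) -> str:
        """Record the job and hand back an ID right away; no rendering happens here."""
        job_id = uuid.uuid4().hex
        self._jobs[job_id] = {"status": JobStatus.QUEUED, "payload": payload, "video_url": None}
        return job_id

    def complete(self, job_id: str, video_url: str) -> None:
        """Called later, once the render has actually finished."""
        job = self._jobs[job_id]
        job["status"] = JobStatus.DONE
        job["video_url"] = video_url

    def status(self, job_id: str) -> JobStatus:
        return self._jobs[job_id]["status"]

    def video_url(self, job_id: str) -> Optional[str]:
        return self._jobs[job_id]["video_url"]

store = JobStore()
job_id = store.submit({"prompt": "sunrise over a harbor"})
status_before = store.status(job_id)
store.complete(job_id, "https://example.com/renders/harbor.mp4")
status_after = store.status(job_id)
print(status_before.value, "->", status_after.value)
```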

Webhooks vs. Polling

Polling the endpoint every five seconds just wastes your compute and risks rate limits. Webhooks are the better way to go.
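If you do go with webhooks, you will want to check that each callback really came from the vendor. A common approach is an HMAC signature over the request body. The sketch below assumes an HMAC-SHA256 hex signature and a job_id/status event shape, and both vary by vendor, so check the real docs before relying on either.

```python
import hashlib
import hmac
import json
from typing import Dict

def sign(secret: bytes, body: bytes) -> str:
    """Compute the hex HMAC-SHA256 signature of a request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()

def handle_webhook(secret: bytes, body: bytes, signature: str, jobs: Dict[str, str]) -> int:
    """Verify the signature, update job state, and return an HTTP status code."""
    if not hmac.compare_digest(sign(secret, body), signature):
        return 401  # reject callbacks we cannot authenticate
    event = json.loads(body)
    jobs[event["job_id"]] = event["status"]
    return 200

jobs = {"job_123": "processing"}
secret = b"demo-secret"
body = json.dumps({"job_id": "job_123", "status": "completed"}).encode("utf-8")

ok_code = handle_webhook(secret, body, sign(secret, body), jobs)
bad_code = handle_webhook(secret, body, "not-a-valid-signature", jobs)
print(ok_code, bad_code, jobs)
```

Using hmac.compare_digest instead of == avoids leaking timing information about how much of the signature matched.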

Chaining Models into Pipelines

To achieve a true Cinematic Director workflow, you rarely use just one model.

The standard pipeline looks like this: Text Prompt → LLM Optimization → Image Generation → Image-to-Video → Audio Sync → Subtitle Overlay.

Every single stage here is one API call. The output of the previous stage becomes the direct input for the next. But here is the catch. Building this pipeline across five different vendors means you are managing 5 API keys, 5 separate billing dashboards, and 5 wildly different SDKs. This is exactly why using an aggregator platform is becoming totally essential.
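The chaining idea itself is simple to sketch. Below, every stage is a stub that stands in for a real API call. The function names, dictionary keys, and example URLs are all invented for illustration.

```python
from typing import Any, Callable, Dict, List

Stage = Callable[[Dict[str, Any]], Dict[str, Any]]

# Each stub stands in for one vendor API call in the pipeline.
def optimize_prompt(data: Dict[str, Any]) -> Dict[str, Any]:
    return {**data, "prompt": data["prompt"].strip() + ", cinematic lighting"}

def generate_image(data: Dict[str, Any]) -> Dict[str, Any]:
    return {**data, "image_url": "https://example.com/frame.png"}

def image_to_video(data: Dict[str, Any]) -> Dict[str, Any]:
    return {**data, "video_url": "https://example.com/clip.mp4"}

def sync_audio(data: Dict[str, Any]) -> Dict[str, Any]:
    return {**data, "audio_synced": True}

def overlay_subtitles(data: Dict[str, Any]) -> Dict[str, Any]:
    return {**data, "subtitled": True}

def run_pipeline(stages: List[Stage], initial: Dict[str, Any]) -> Dict[str, Any]:
    """Feed the output of each stage into the next one, in order."""
    data = dict(initial)
    for stage in stages:
        data = stage(data)
    return data

result = run_pipeline(
    [optimize_prompt, generate_image, image_to_video, sync_audio, overlay_subtitles],
    {"prompt": "  a lighthouse at dusk "},
)
print(result)
```

Because every stage shares one signature, swapping the vendor behind a single step means replacing one function and leaving the rest of the chain alone.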

Error Handling and Retry Strategies

Sometimes, generations just randomly fail. Maybe a server drops the ball, or a prompt triggers a strict safety filter. You need smart retry logic. Don't just blindly loop the exact same request. Add a slight prompt variation before retrying to avoid hitting the exact same error.
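One way to sketch that retry logic is shown below. The GenerationError class, the generate callable, and the list of prompt variations are all hypothetical. The exponential backoff between attempts is an extra assumption on top of the prompt-variation idea described above.

```python
import time
from typing import Callable, Optional, Sequence

class GenerationError(Exception):
    """Raised by the (hypothetical) generate callable when a render fails."""

def generate_with_retry(
    generate: Callable[[str], str],
    prompt: str,
    variations: Sequence[str],
    max_attempts: int = 3,
    base_delay_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try the original prompt first, then slightly varied prompts with backoff."""
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        if attempt == 0 or not variations:
            candidate = prompt
        else:
            candidate = f"{prompt}, {variations[(attempt - 1) % len(variations)]}"
        try:
            return generate(candidate)
        except GenerationError as exc:
            last_error = exc
            if attempt < max_attempts - 1:
                sleep(base_delay_s * 2 ** attempt)  # waits 1s, 2s, 4s, ...
    raise GenerationError(f"gave up after {max_attempts} attempts") from last_error

# Fake generator that rejects the original prompt but accepts the varied one.
def flaky_generate(candidate: str) -> str:
    if "soft lighting" not in candidate:
        raise GenerationError("blocked by filter")
    return f"video-for:{candidate}"

result = generate_with_retry(
    flaky_generate, "a dog running", ["soft lighting"], sleep=lambda seconds: None
)
print(result)
```

Passing sleep in as a parameter keeps the function easy to test, and the cap on attempts stops a permanently blocked prompt from burning credits forever.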

Cost and Latency Optimization

Different models have very different costs per second and generation times.

You should use fast, low-cost models for rough user previews. Once the user approves the shot, you switch to high-cost models for the final cinematic render. If you use a unified API layer, you can implement this exact model-switching logic without modifying your core application code at all.
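A rough sketch of that preview-then-final routing is below. The model names and resolutions are placeholders, not real product identifiers. The point is that the stage-to-model mapping lives in one table.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class ModelChoice:
    model: str
    resolution: str

# Placeholder model names; swap in whatever your provider actually exposes.
ROUTES: Dict[str, ModelChoice] = {
    "preview": ModelChoice(model="fast-preview-model", resolution="480p"),
    "final": ModelChoice(model="cinematic-model", resolution="4k"),
}

def build_render_request(prompt: str, stage: str) -> Dict[str, str]:
    """Pick the model for this stage and return the request payload."""
    try:
        choice = ROUTES[stage]
    except KeyError:
        raise ValueError(f"unknown stage: {stage!r}") from None
    return {"model": choice.model, "resolution": choice.resolution, "prompt": prompt}

preview_request = build_render_request("slow push-in on a watch", "preview")
final_request = build_render_request("slow push-in on a watch", "final")
print(preview_request["model"], "->", final_request["model"])
```

Changing which model handles previews is then a one-line edit to ROUTES, with no change to the calling code.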

Streaming vs. Batch Processing

If you need 50 localized ads by tomorrow, just use batch processing endpoints to save money. But if you need instant gratification, we are finally seeing true streaming endpoints. They let the user watch the first few frames while the rest of the video still renders in the background.

What is an AI Video Aggregator API?

An AI video aggregator API is a unified infrastructure layer that allows developers to access, chain, and switch between multiple generative video models (like Sora 2, Kling 3.0, and Seedance 2.0) using a single SDK, one API key, and consolidated billing.

Summary: AI Video Aggregator API Platform as a Strategy

Relying on an AI video aggregator API platform such as Atlas Cloud is easily the smartest strategy for handling AI video in 2026.

Cost Optimization & Unified Billing: You get exactly one invoice at the end of the month. You can easily route cheap preview tasks to fast models, saving your budget for expensive final renders.

Fallback Services: If a vendor's server crashes mid-render, developers can switch to another model within the aggregator. You basically get near-zero downtime.

Stacking Advantages & Unified Management: You can combine the native audio of one model with the visual physics of another. It gives you incredible architectural convenience through a single Atlas Cloud SDK.

    Your Application
           │
           ▼
    Atlas Cloud API ────── Unified authentication, billing, and monitoring
           │
           ├── DeepSeek (V3, Coder)
           ├── Alibaba (Qwen, Qwen-Image)
           ├── ByteDance (Seedream, Seedance, Kling)
           ├── Black Forest Labs (FLUX)
           ├── MoonshotAI (Kimi)
           ├── MiniMax (Hailuo)
           ├── Luma AI (Video)
           ├── Zhipu AI (GLM)
           └── ... 20+ more providers
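The fallback idea from the summary can be sketched in plain Python. This is not the Atlas Cloud SDK. The provider names and callables are stand-ins, and a real aggregator may handle failover for you on the server side.

```python
from typing import Callable, Dict, List, Tuple

Provider = Tuple[str, Callable[[dict], str]]

def generate_with_fallback(providers: List[Provider], payload: dict) -> Tuple[str, str]:
    """Try each provider in order and return (provider_name, result) for the first success."""
    errors: Dict[str, str] = {}
    for name, call in providers:
        try:
            return name, call(payload)
        except Exception as exc:  # broad on purpose for the sketch; narrow this in real code
            errors[name] = str(exc)
    raise RuntimeError(f"all providers failed: {errors}")

# Stand-in provider functions for illustration.
def primary_model(payload: dict) -> str:
    raise ConnectionError("server crashed mid-render")

def backup_model(payload: dict) -> str:
    return f"https://example.com/renders/{payload['shot']}.mp4"

used, video_url = generate_with_fallback(
    [("primary", primary_model), ("backup", backup_model)],
    {"shot": "opening"},
)
print(used, video_url)
```

Keeping the provider list ordered means the preferred model is always tried first, and the backup only costs you money when the primary actually fails.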

FAQ

Which AI video APIs offer the best cinematic control in 2026?

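Going by the comparison table above, Sora 2 and Gen-4.5 are the two models rated "Granular" for scene control, and Veo 3.1 is the strongest on multi-shot narrative logic. I would also keep an eye on Wan 2.7 if you are heavily focused on e-commerce aesthetics.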

How do I choose the right AI video API for my application?

It completely depends on your users. If they need fast, cheap social clips, use a high-throughput model. If they need perfect structural logic, use something heavier.

Can we convert ordinary videos into cinematic videos using AI APIs?

Absolutely. Tier 3 video-to-video endpoints let you upload basic phone footage and completely reskin it. The AI perfectly locks the underlying motion and transforms the style.

Ready to build the next generation of cinematic AI apps? [Get your Atlas Cloud API key right here] and start testing our cinematic generation features today. We even throw in a few test credits so you can run your first multi-shot pipeline on us.